“Self-report captures 27 distinct categories of emotion bridged by continuous gradients” by Cowen and Keltner (2017) in PNAS has raised interest but also ridicule. Sure, if your preconception is that discrete theories are bs anyway, then “there are 27 categories instead of the traditional six!” may seem funny. But I have argued (Kivikangas, in review; see also Scarantino & Griffiths, 2011) that discrete emotion views (not the same as basic emotion theories) have their place in emotion theory, and I find a lot of good in the article – as long as it is kept in mind that it is about self-reports of emotional experiences.

To summarize, the participants were shown short video clips, and different ratings of emotional experiences resulted in a list of 27 semantic categories that overlap somewhat, implying that both discrete and dimensional views are right. The sample could have been bigger, the list they started with seems somewhat arbitrary, and the type of stimuli probably influences the results. But the article supports many of my own ideas, so my confirmation bias says it’s valid stuff.

In a bit more detail:

- They had 853 participants (which, IMO, they should mention in the main text as well) from MTurk watching in total 2185 videos (5 s on average) and judging their feelings. The participants were divided into three groups [1]:

- first group provided free responses to 30 randomly assigned videos (although the supporting information says this was not entirely free, but a predefined set of 600 terms which were autocompleted when the participant typed into a blank box);

- second group rated 30 videos according to a predefined set of 34 discrete categories (had to choose at least one, but could choose more – apparently this choice was dichotomous) the authors had gathered from bits and pieces around the literature;

- third group rated 12 videos according to a predefined set of 14 dimensional [2] scales (9-point Likert scale).

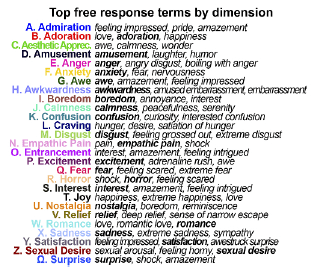

- I don’t pretend to know the statistical methods so well that I could vouch for their verity [3], but the authors report that 24 to 26 of the 34 discrete categories from the second group were independent enough [4] to rate the videos reliably. The “free” responses from the first group provided 27 independent [5] descriptions, which were then factored into the 34 categories to find out the independent categories. Apparently these three analyses are taken as evidence that categories beyond the 27 are redundant (e.g. used as synonyms; statistically not reliably independent).

- Their list above (Fig. 2C) dropped the following from the original 34 categories: contempt and disappointment (coloading on anger), envy and guilt (unreliable, but not loading on any other factor), pride [6] and triumph (coloading on admiration), and sympathy (coloading on empathic pain and sadness). I discuss the list and these dropped categories below.

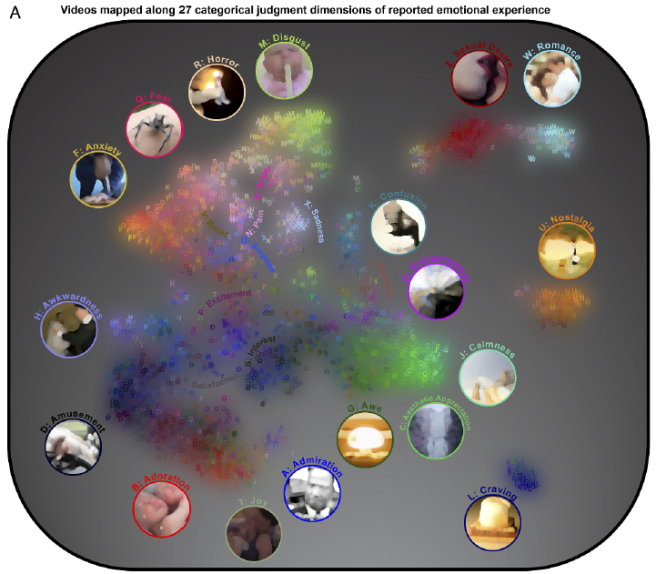

- These categories are not completely distinct, but smoothly transition to some (but not any!) of the neighboring categories, e.g. anxiety overlaps with fear, and fear overlaps with horror, but horror does not noticeably overlap with anxiety. When the 27 mathematical dimensions these categories are loading are collapsed into two, we get this map:

- The map is also available in higher resolution here, and it’s interactive! You can point at any letter and see a gif of the rated video!

- The authors compare these categories to the 14 dimensions from the third group, and report that while the affective dimensions explained at most 61 % of the variance in the categorical judgment dimensions, the categories explained 78 % of the variance in the affective dimension judgments. When factored into the categorical data, valence is the strongest factor, “unsafety+upswing” is the second (I read this as threat + excited arousal), and commitment (?) is the third.

- A final claim is that the emotion reports are not dependent on demographics or personality or some other psychological traits (except perhaps religiosity).

The article begins with the remark that “experience is often considered the sine qua non of emotion”, and the general language in the article firmly places it on the experiential level: the study does not focus on the hypothesized psychological processes behind experiences, nor on the neural structures or evolutionary functions comprising the whole affect system. I mention this specifically, because IMO the inability to differentiate between these different levels is one of the main reasons the wide range of emotion theories seem so incompatible (which is one of the main points in Kivikangas, in review). The article recognizes the limits of the semantic approach admirably without making overextending claims, although in the discussion they do speculate (pretty lightly) about how the findings might relate to the neural level. However, although the authors avoid overextending and list relevant limitations (should be studied with other elicitors, other languages and cultures, etc.), the paper is probably still going to be read by many people as a suggestion that there are 27 strictly discrete categories (no, they are somewhat continuous) of emotions (no, these are only self-reports of emotional experiences – and even self-report “is not a direct readout of experience”, as the authors point out).

Furthermore, I like the position (similar to my own) of saying that – although Keltner is a known discrete theorist – both discrete and dimensional views have some crucial parts right, but that the strictest versions are not supported (“These findings converge with doubts that emotion categories “cut nature at its joints” (20), but fail to support the opposite view that reported emotional experiences are defined by entirely independent dimensions”). The authors also start from the astute observation that “the array of emotional states captured in past studies is too narrow to generalize, a priori, to the rich variety of emotional experiences that people deem distinct”. Another point I recently made (Kivikangas, in review) was that although the idea of early evolutionary response modules for recurrent threats and opportunities is plausible, that the number of these modules would be the traditional six, or even 15 (Ekman & Cordaro, 2011), is not. My view is that affects (not experiences, but the “affect channels” of accumulating neural activation; Kivikangas, 2016) are attractor states, produced by a much larger number (at least dozens, probably hundreds) of smaller processes interacting together. And there is definitely no reason to believe that the words of natural language – typically English in these studies – would describe them accurately (as pointed out by, among others, Russell, 2009, and Barrett, pretty much anything from 2006 onwards).

So there is a lot I like in the article. However, there are some obvious limitations that they do not explicitly state. First, they begin from a list of 34 terms from different parts of the literature, which is a wider array than normally used, but still rules out a lot of affective phenomena. From my own research history, media experiences have other relevant feelings, like frustration or tension (anticipation of something happening, typically produced with tense music). Of course one can say that those are covered by anger and anxiety, for example, but I would have to point out the relatively small number of participants – the factors might be different with another sample. (A side point is that while this seems to be a fine study, for a more specific purpose, such as wider use in game research, one would probably want to conduct one’s own study with that particular population, because the usage would probably be different.)

A theoretically more interesting point is that they include categories like nostalgia and sexual desire, and even craving and entrancement, which many theorists would argue vehemently against in a list of emotions. Me, I am happy for their inclusion as I think that “emotion” is a somewhat arbitrary category anyway and if we are looking at the affective system as a whole, we note a lot of stuff that is certainly affective but is not thought of as emotion (one more point I made in Kivikangas, in review…; also mentioned by Russell, 2009). But it raises the question of why many other less traditional categories were not included. Schadenfreude, bittersweet, moral anger/disgust (interestingly, one of the dimensions was “fairness”)? What about thirst? Could be included in craving, but we don’t know, because it wasn’t in the study. I have stated (Kivikangas, 2016) that startle is affective, as is the kind of curiosity to which Panksepp refers as “seeking” (Panksepp & Biven, 2012). Would the YouTube generation differentiate between amusement and lulz? Naturally, some decisions must be made about what to keep and what to ignore, but if they were going for the widest possible array (with things like nostalgia, craving, and entrancement), I think it could still be considerably wider. I have not looked at the list of 600 “free” responses, but apparently the authors checked which of the 34 categories were supported by the free responses, but did not check what other potentially relevant categories the responses might have included.

A second obvious limitation is the stimulus type: 5-sec (on average) video clips. The authors state that this should be studied with other kinds of elicitors, sure, but they don’t explicitly mention that maybe some of their results are due to that. Specifically, the reason for dropping quite common emotions – contempt, disappointment, envy, guilt, pride, triumph, and sympathy – from their list might be that they (at least some of them) need more context. Guilt, pride, and triumph are related to something the person does, not something they simply observe in third person. Contempt is related to a more comprehensive evaluation of the target’s personality, and envy relates to one’s own possessions or accomplishments. Actually, I was surprised that they found anger, which may also be difficult to elicit without context (as anger traditionally is thought to relate to a person’s own goals) – but indeed, in the supporting information it was the second next category to drop when they tested models with fewer (25) factors. I suspected that there might be clips with angry people and that participants had recognized anger instead of feeling it, but this seems not to be the case. Clips labeled E (for anger) in the interactive map show either unjustified and uncalled-for acts of violence, or Trump or Hillary Clinton – which are probably closer to moral anger than the traditional blocked-goals anger. Anyhow, the list of found factors would probably be even longer if the type of stimulus did not limit it.

In conclusion, although I have been more interested in the affective system underlying the emotion experiences and haven’t seen much point in the arguments over whether the experience can be best described as discrete emotions or dimensions, the empirical map combining aspects of both is much more plausible to me than a strictly discrete list or a too tidy circumplex. And even though the reports of (a priori restricted) emotions are not the same as the affective system underlying them, I am hopeful that this paper advances the discussion by suggesting that the different models are perhaps not incompatible, and that the models may be different on different levels of scrutiny (i.e. experience vs. psychological vs. neural vs. evolutionary).

Footnotes

[1] The numbers are a bit unclear. The authors flaunt: “these procedures yielded a total of 324,066 individual judgments (27,660 multiple choice categorical judgments, 19,710 free-response judgments, and 276,696 nine-point dimensional judgments)”.

They say that “Observers were allowed to complete as many of versions of the survey as desired, with different videos presented in each”, and that “Each of the 2,185 videos was judged by 9 to 17 observers in terms of the 34 categories”, but repetitions per participant or per video for other response types are unclear. Without further information, then, 853 in 3 groups = 284 participants per response type, which is barely above what Lakens & Evers (2014) say is needed for finding a stable (see quotation below) small effect size (r = .1; required n = 252), but below what is required for 80 % power (n = 394) for that effect size. According to the within-subjects power calculations I remember, 9-17 repetitions per video hardly helps the power at all.

“With a small number of observations, effect size estimates have very wide CIs and are relatively unstable. An effect size estimate observed after collecting 20 observations can change dramatically if an additional 20 observations are added. An important question when designing an experiment is how many observations are needed to observe relatively stable effect size estimates, such that the effect size estimate will not change considerably when more participants are collected.” Lakens & Evers, 2014, p. 279
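The quoted totals can at least be sanity-checked with some quick arithmetic. This is only a rough sketch: the counts come from the quotation above, but the per-video averages ASSUME that one categorical or free-response judgment means one rater rating one video, and that one dimensional judgment means one rater rating one video on one of the 14 scales. The paper may count these differently.

```python
# Counts quoted from the paper in footnote [1].
categorical = 27_660
free_response = 19_710
dimensional = 276_696
reported_total = 324_066

n_videos = 2_185
n_participants = 853
n_scales = 14  # dimensional scales, see footnote [2]

# The three judgment types should add up to the reported total.
total = categorical + free_response + dimensional

# Naive equal split of the sample into the three response types.
per_group = n_participants / 3

# Average raters per video under the ASSUMPTIONS stated above.
raters_categorical = categorical / n_videos
raters_free = free_response / n_videos
raters_dimensional = dimensional / n_scales / n_videos

print("totals match:", total == reported_total)
print("participants per group:", round(per_group, 1))
print("raters per video:",
      round(raters_categorical, 2),
      round(raters_free, 2),
      round(raters_dimensional, 2))
```

Under these assumptions the totals match, and the averages come out at roughly 12.7 raters per video for the categories (consistent with the reported 9 to 17) and about 9 per video for the other two response types. That is my back-of-the-envelope reading, not something the paper states.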

[2] Mostly appraisal dimensions in addition to traditional dimensions: approach, arousal, attention, certainty, commitment, control, dominance, effort, fairness, identity, obstruction, safety, upswing, valence.

[3] One thing I found weird was the median-split correlation for demographics and other traits. They used it to show that traits do not explain differences in emotional responding, but a quick googling only shows recommendations that median-splits should not be used because it loses a lot of information. I hope this is not a sign that the method has been used purposefully in order to find no differences.
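To illustrate why median splits are usually discouraged, here is a small simulation sketch. It does not reproduce the authors' analysis: the sample size, the "true" correlation of .5, and the normally distributed variables are all hypothetical values picked for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 200_000   # hypothetical sample size, large so the estimate is stable
rho = 0.5     # hypothetical true correlation between trait and response

# Simulate a continuous trait x and a response y correlated at rho.
x = rng.standard_normal(n)
y = rho * x + np.sqrt(1 - rho**2) * rng.standard_normal(n)

# Correlation using the full, continuous trait.
r_continuous = np.corrcoef(x, y)[0, 1]

# Correlation after a median split of the trait (high vs. low group).
x_split = (x > np.median(x)).astype(float)
r_split = np.corrcoef(x_split, y)[0, 1]

ratio = r_split / r_continuous
print(f"continuous r = {r_continuous:.3f}")
print(f"median-split r = {r_split:.3f}")
print(f"ratio = {ratio:.3f}")
```

For normally distributed variables the split shrinks the observed correlation by a factor of about sqrt(2/pi), i.e. to roughly 80 % of its continuous value, so real trait effects become harder to detect after a median split.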

[4] “Using SH-CCA we found that between 24 (P < 0.05) and 26 (P < 0.1) statistically significant semantic dimensions of reported emotional experience (i.e., 24–26 linear combinations of the categories) were required to explain the reliability of participants’ reports of emotional experience in response to the 2,185 videos.” I don’t immediately understand how this method works.

[5] “In other words, we determined how many distinct varieties of emotion captured by the categorical ratings (e.g., fear vs. horror) were also reliably associated with distinct terms in the free response task (e.g., “suspense” vs. “shock”). We did so using CCA, which finds linear combinations within each of two sets of variables that maximally correlate with each other. In this analysis, we found 27 significant linearly independent patterns of shared variance between the categorical and free response reports of emotional experience (P < 0.01), meaning people’s multiple choice and free-response interpretations identified 27 of the same distinct varieties of emotional experience.”
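To get a feel for what CCA does, here is a minimal sketch of plain canonical correlation analysis in NumPy. It is not the authors' pipeline (no split-half variant, no significance testing), and the synthetic data, the variable names, and the helper `canonical_correlations` are all hypothetical. Two sets of ratings share two latent "varieties" and otherwise contain only noise, so we would expect two large canonical correlations and the rest near zero.

```python
import numpy as np

def canonical_correlations(X, Y):
    """Canonical correlations between two variable sets (rows = observations).

    Uses the QR-based approach: orthonormalize each centered set, then take
    the singular values of the cross-product of the two orthonormal bases.
    Assumes both sets have full column rank.
    """
    Xc = X - X.mean(axis=0)
    Yc = Y - Y.mean(axis=0)
    Qx, _ = np.linalg.qr(Xc)
    Qy, _ = np.linalg.qr(Yc)
    s = np.linalg.svd(Qx.T @ Qy, compute_uv=False)
    return np.clip(s, 0.0, 1.0)  # guard against tiny numerical overshoot

rng = np.random.default_rng(1)
n = 5_000
latent = rng.standard_normal((n, 2))  # two shared latent "varieties"

# Set X: noisy copies of the latents plus two pure-noise columns.
X = np.hstack([latent + 0.3 * rng.standard_normal((n, 2)),
               rng.standard_normal((n, 2))])
# Set Y: other noisy copies of the latents plus one pure-noise column.
Y = np.hstack([latent + 0.3 * rng.standard_normal((n, 2)),
               rng.standard_normal((n, 1))])

corrs = canonical_correlations(X, Y)
print(np.round(corrs, 3))
```

The number of clearly non-zero canonical correlations plays the role of the "27 significant linearly independent patterns" in the quotation. Deciding which ones count as significant is the part this sketch leaves out.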

[6] Fig. 1 and its caption show pride not loading on its own factor and relief loading, but the text talks about these vice versa, and relief is in other figures, so most likely Fig. 1 is mistaken.

References

Barrett, L. F. (2006). Are Emotions Natural Kinds? Perspectives on Psychological Science, 1(1), 28–58. https://doi.org/10.1111/j.1745-6916.2006.00003.x

Cowen, A. S., & Keltner, D. (2017). Self-report captures 27 distinct categories of emotion bridged by continuous gradients. Proceedings of the National Academy of Sciences, 114(38), E7900–E7909. https://doi.org/10.1073/pnas.1702247114

Ekman, P., & Cordaro, D. (2011). What is meant by calling emotions basic. Emotion Review, 3(4), 364–370. https://doi.org/10.1177/1754073911410740

Kivikangas, J. M. (2016). Affect channel model of evaluation and the game experience. In K. Karpouzis & G. Yannakakis (Eds.), Emotion in games: theory and praxis (pp. 21–37). Cham, Switzerland: Springer International Publishing. https://doi.org/10.1007/978-3-319-41316-7_2

Kivikangas, J. M. (in review). Negotiating peace: On the (in)compatibility of discrete and constructionist emotion views. Manuscript in review.

Lakens, D., & Evers, E. R. (2014). Sailing from the seas of chaos into the corridor of stability: Practical recommendations to increase the informational value of studies. Perspectives on Psychological Science, 9(3), 278–292.

Panksepp, J., & Biven, L. (2012). The archaeology of mind: neuroevolutionary origins of human emotions. New York, NY: W.W. Norton & Company, Inc.